Building Spark Core firmware locally

I have recently started a couple of projects based on the great Spark Core board. Hopefully I will be able to talk about them here soon.

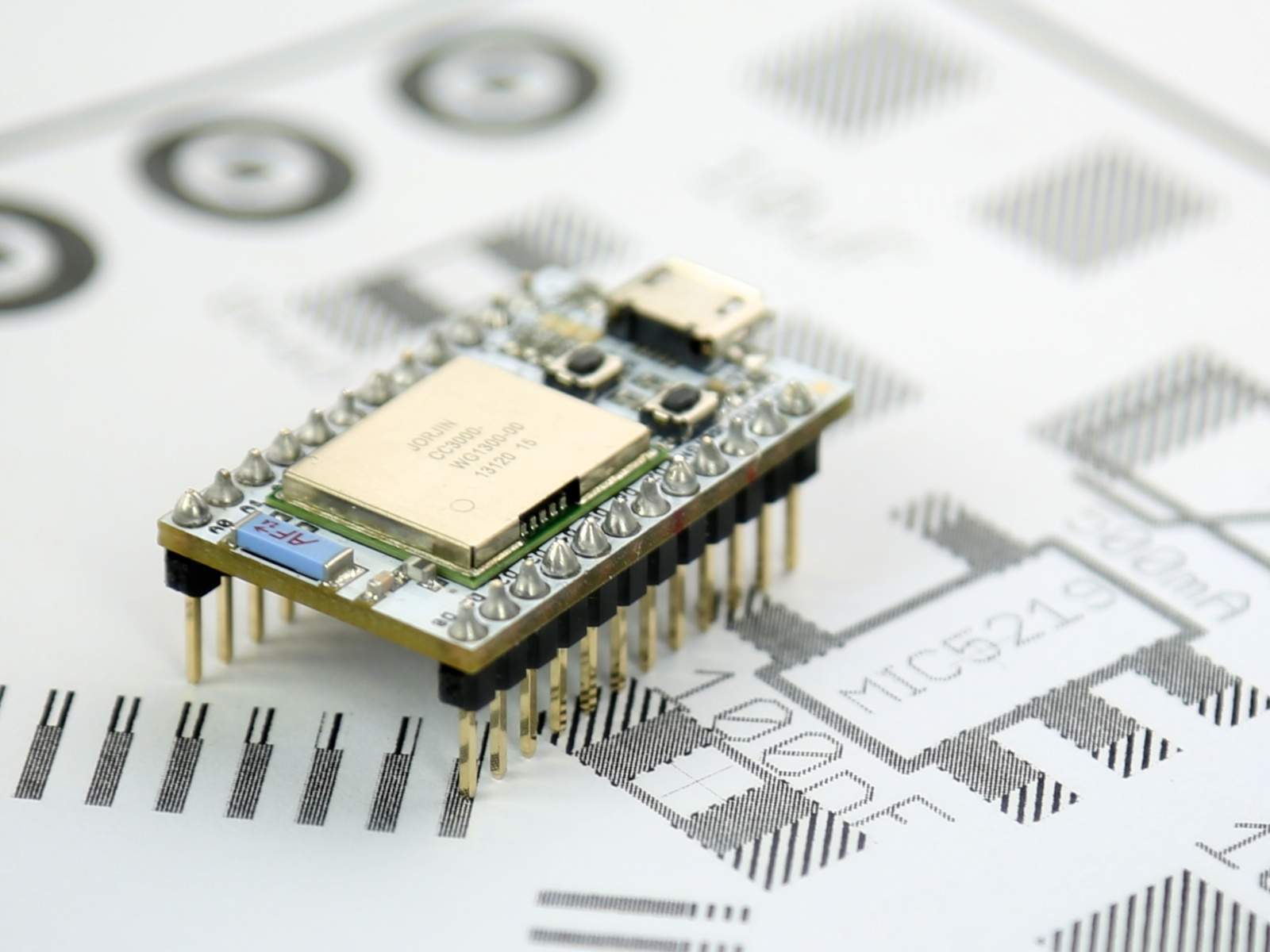

The Spark Core is a development board that was crowd-funded through a Kickstarter campaign. It is based on the STM32F103CB, an ARM 32-bit Cortex-M3 microcontroller by ST Microelectronics (the same family you can find in the new Nucleo platform by ST). But there are some big things about this board:

- First, it comes with a CC3000 WiFi module by Texas Instruments, which is the shiny new kid on the block. Adafruit has the module available in a convenient breakout board.
- Second, it is Arduino compatible in the sense that it implements the Wiring library, that is, you can port Arduino projects to this board with little effort.
- Third, Spark.io, the company behind the Spark Core, has developed a cloud-based IDE you can use to write your code and flash it to your Spark Core over the air (OTA).
- And fourth, you can communicate with your Spark Core with a simple API through the cloud, exposing variables and invoking methods.
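
To give an idea of what that fourth point looks like from the client side, here is a minimal Python sketch. It assumes the Spark Cloud REST endpoints follow the form `https://api.spark.io/v1/devices/<device id>/<name>`, with the access token sent as a parameter and a single `args` string for function calls; the device ID, token, variable and function names are placeholders of mine. It only builds the requests and does not send anything.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Assumed base URL of the Spark Cloud API.
API_BASE = "https://api.spark.io/v1/devices"


def variable_request(device_id, name, token):
    """Build a GET request that reads a variable exposed by the firmware."""
    query = urlencode({"access_token": token})
    return Request(f"{API_BASE}/{device_id}/{name}?{query}", method="GET")


def function_request(device_id, name, arg, token):
    """Build a POST request that invokes an exposed function with one string argument."""
    body = urlencode({"access_token": token, "args": arg}).encode("ascii")
    return Request(f"{API_BASE}/{device_id}/{name}", data=body, method="POST")
```

Passing either request to `urllib.request.urlopen` would perform the actual call, and the cloud is expected to answer with a JSON document.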

It is really awesome, and it works!

There are similar solutions now, even for the Arduino platform, like codebender.cc, wifino.com or the mbed Compiler, but they don't pack all the features the Spark Core does, and the hardware they require is more expensive and noticeably bulkier.

The team at Spark.io is very involved and active, so I foresee a great future for their solution, but there are still lots of things to polish. They are facing some criticism because the Spark Core has to be connected to the cloud in order to work, which means it needs a WiFi connection and Internet access. It also means that all communications have to pass through Spark.io servers. But this will soon be optional. On the one hand, they are refactoring the code to remove the requirement for the Spark Core to be connected at all; on the other, they are about to release their cloud server under an open source license (the firmware is open source already), so you will be able to run your own cloud on your own server. The release of their cloud server will be a major event. The Spark Core's strength lies in the possibility of flashing it remotely and in the API to execute methods and retrieve values.

In the meantime, I have to say that building and flashing the code remotely is slow. If you are like me and want to code in small iterations and build frequently, it is a bit frustrating. But since the firmware is open, you can build it locally.

The Spark Core firmware is split into 3 different repositories: core-firmware, core-common-lib and core-communication-lib, totaling almost 500 files and more than 336k lines (including comments, makefiles, etc.). It is big. To build your own firmware you have to modify or add your files in the core-firmware project. This means keeping a copy of this repository for every single project you are working on, and this repository alone is more than 187k lines of code. If you use a version control system for your code (Git, SVN, etc.) you have two main options: forking it and ending up with big repositories, or ignoring a bunch of files (while still having the whole firmware code in the working copy, presumably outdated). Not good.

So I decided to go the other way round: projects with just the minimum required files, and a way to transparently “merge” and “unmerge” a single core-firmware folder with my project. I have created a small bash script that does that and allows you to build and upload the code from the command line without leaving your project folder.
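
The merge/unmerge idea can be sketched in a few lines of Python. This is not the bash script itself, just an illustration of the concept under my own assumptions: project files are copied over the shared firmware folder, any file they replace is backed up with an `.orig` suffix, and a manifest (the hypothetical `.merged-files.json`) records what has to be undone afterwards.

```python
import json
import shutil
from pathlib import Path

MANIFEST = ".merged-files.json"  # hypothetical bookkeeping file name


def merge(project, firmware):
    """Copy every project file into the firmware tree, backing up replaced files."""
    merged = []
    for src in sorted(p for p in project.rglob("*") if p.is_file()):
        rel = src.relative_to(project)
        dst = firmware / rel
        dst.parent.mkdir(parents=True, exist_ok=True)
        if dst.exists():
            # Keep the stock file so unmerge can put it back.
            shutil.copy2(dst, dst.with_name(dst.name + ".orig"))
        shutil.copy2(src, dst)
        merged.append(rel.as_posix())
    (firmware / MANIFEST).write_text(json.dumps(merged))
    return merged


def unmerge(firmware):
    """Remove the merged files and restore any backed-up originals."""
    manifest = firmware / MANIFEST
    for rel in json.loads(manifest.read_text()):
        dst = firmware / rel
        backup = dst.with_name(dst.name + ".orig")
        dst.unlink()
        if backup.exists():
            backup.replace(dst)
    manifest.unlink()
```

A build would then be `merge(project, firmware)`, running the firmware's own build from its folder, and `unmerge(firmware)` to leave it clean again. The sketch copies everything under the project folder, so a real tool would want to skip things like version control metadata, and it leaves behind any empty directories it created.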

I don't know if I am reinventing the wheel, but “my” wheel is saving me a lot of time. I can manage my build from within my favourite IDE (vim) while keeping the main core-firmware folder clean and up to date.

The code is in the spark-util repository on Bitbucket. The README file explains the basics and provides some use cases.

Any comments are very welcome.

"Building Spark Core firmware locally" was first posted on 22 February 2014 by Xose Pérez on tinkerman.cat under Code and tagged build, ide, sparkcore.